TL;DR

Enterprises accustomed to slow-changing systems must rethink their services and competencies as more of their bureaucracy becomes software. In many cases, this means corporate services contributing to software where they once only documented business processes.

What I'm Writing

A thread on debugging and troubleshooting. When we troubleshoot, what we are really doing is investigating, forming hypotheses, and testing them in order to come to a solution.

In the Buffer


Uber released Orbit, a new library to facilitate applying machine learning to time series data. This isn’t the first foray by a big tech company into this space: Facebook (now Meta) has maintained a time series library, Prophet, for several years, and some expert analysts have criticized Prophet as a poor abstraction or a poor performer. In general, open source doesn’t yet seem to have commoditized this capability well. Many approaches have focused on scaling autoregressive models, from classical ARIMA to DeepAR from Amazon Research, and there has been some interesting work in the graph neural network (GNN) space too. The ripest use cases, like predictive maintenance, are the ones whose ontologies have settled; use cases like supply chain resilience are harder to implement for all but the highest performers.

No Code Reviews by Default is certainly an interesting take. I don’t like how code can sit waiting for a review instead of flowing into production. Still, in some cases it takes significant work to reach a state where the tests help you separate the changes that need review from the ones that are low-impact enough to pass without four eyes. I don’t think I’ll be recommending that teams forgo code reviews on infrastructure GitOps repositories anytime soon.

A friend reminded me of an article I shared with them. I think “Engineers Shouldn’t Write ETL” is a landmark essay: Magnusson gives one of the earliest articulations of the problem. Within five years, the platform ecosystem was “Red Hot”.

Marianne Bellotti’s book Kill It With Fire has been recommended to me several times now, and if that weren’t enough, after reading their excellent post “Hunting Tech Debt via Org Charts”, you’d better believe I’m looking forward to digging in. Bellotti’s analysis reminds me of a previously highlighted article, If You Want To Transform IT, Start With Finance.

A Malaise Falls Over Scones Unlimited

A few months after the events of our previous tale, Metadata Debt, we revisit Scones Unlimited (“The Lyft of Scones”). Our intrepid leader, ever the enterprise (and enterprising) detective, begins to notice a new problem. Once again, the problems she hears about rhyme. The game is afoot!

In her regular skip-level listening session, she gets randomly paired with an associate in her general counsel’s office. The young man is earnest and clearly hardworking, but it’s obvious that he’s deep in drudgery. For example: “we receive about 100 inquiries from the system every month, and I sort them into topic area and route them to other associates.” It’s clear that the associate has hundreds of chores like this one in any given month.

In a touchpoint with her head of cloud, they confide in her that Scones Unlimited is carrying significant costs because the scale-down conditions on its cloud resources are too conservative. In theory, the company was built on the cloud to take advantage of “elastic” computing capabilities, including “scale-to-zero” and “self-healing”. In practice, it is getting some of these benefits, but not nearly as much as it needs. In a follow-up call with her CFO, the impact is clear: cloud is a significant portion of spend at Scones Unlimited, and these inefficiencies are a drag on overall cash flow!

The Director of Scone Excellence is having trouble running experiments efficiently. Upon further examination, it turns out that the test environment has some manual steps in its deployment process, so there are hidden costs to each simulation. It’s hard to say for sure how much, but experiments are definitely slower than they need to be.

The service desk is underwater: it turns out that every ticket is being manually reviewed by one of the senior architects.

All of these cases are examples of technical debt; specifically, there’s not enough automation. It turns out that automation has many different dimensions. We’ll examine just three: the economics, the architectural considerations, and the business challenges that stop our organization from applying automation.

Automation Economics, Briefly

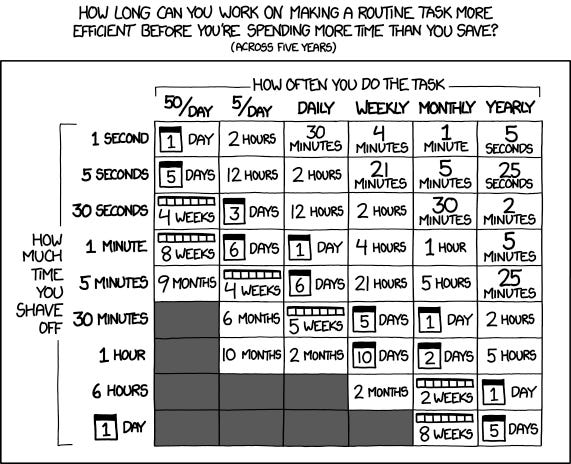

A picture is worth a thousand words:

When we think about what we want from automation, the first thing many of us think about is time. We will “automate the boring stuff” and get time back. We can think of this as a quantitative benefit of automation, with the person-hours saved hopefully improving our cash flows. In the picture above, I think Munroe isn’t counting any time invested in making the task more efficient, and he’s clearly assuming that the same person is the sole beneficiary of the efficiencies.

The math changes in the enterprise, and the bet is more complex. Betting an hour of staff time on creating efficiencies through automation is an investment, with the goal of making everyone (or some subset of the staff) more efficient in their productive work. There is also the opportunity cost of this hour: it is an hour spent on automation, not on the core productive work of the organization.
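To make the enterprise version of the bet concrete, here is a back-of-the-envelope sketch. Everything in it is an assumption for illustration: the `AutomationBet` class, the linear model of time saved, and especially `opportunity_multiplier`, a crude stand-in for the opportunity cost of pulling someone off core work. It compares the same 40-hour investment when one person benefits versus when twenty do.

```python
from dataclasses import dataclass


@dataclass
class AutomationBet:
    """Hypothetical model of one automation investment; every input is an estimate."""
    hours_invested: float          # staff hours spent building the automation
    minutes_saved_per_run: float   # time saved each time someone runs the task
    runs_per_week: float           # how often each beneficiary runs the task
    beneficiaries: int             # how many people share the savings
    horizon_weeks: float           # how long we expect the automation to stay useful
    opportunity_multiplier: float = 1.0  # assumed value of an hour of core work vs. an hour saved

    def weekly_hours_saved(self) -> float:
        return (self.minutes_saved_per_run / 60.0) * self.runs_per_week * self.beneficiaries

    def hours_saved(self) -> float:
        """Total person-hours saved across all beneficiaries over the horizon."""
        return self.weekly_hours_saved() * self.horizon_weeks

    def effective_cost(self) -> float:
        """Invested hours, scaled by what they would have produced on core work."""
        return self.hours_invested * self.opportunity_multiplier

    def net_hours(self) -> float:
        return self.hours_saved() - self.effective_cost()

    def break_even_weeks(self) -> float:
        """Weeks until cumulative savings cover the effective cost."""
        weekly = self.weekly_hours_saved()
        return float("inf") if weekly == 0 else self.effective_cost() / weekly


# One person automating their own 5-minute daily chore over ~5 years (260 weeks)...
solo = AutomationBet(hours_invested=40, minutes_saved_per_run=5, runs_per_week=5,
                     beneficiaries=1, horizon_weeks=260)
# ...versus the same tool shared by 20 people, with the builder's hours valued at 2x.
team = AutomationBet(hours_invested=40, minutes_saved_per_run=5, runs_per_week=5,
                     beneficiaries=20, horizon_weeks=260, opportunity_multiplier=2.0)

for label, bet in [("solo", solo), ("team", team)]:
    print(f"{label}: net {bet.net_hours():.1f} h, break-even in {bet.break_even_weeks():.1f} weeks")
```

With these made-up inputs, the solo bet needs 96 weeks to break even, while the shared tool pays back in under 10 weeks even after doubling the cost of the builder's time. The model ignores maintenance and risk entirely.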

But automation doesn’t just deliver a quantitative benefit in time saved; it can also bring qualitative and categorical change. In the previous example, what if taking staff off core productive work to focus on automation causes us to miss a delivery milestone? Will we see an impact on customer experience, reputation, or staff morale? The complementary story is also true: as we’ll see in the discussion of architecture, automating some processes can unlock entirely new capabilities for the enterprise.

Finally, the risks of an automation project have much in common with those of other technical projects. How will falling behind schedule affect our budget? When will the value-add work products become available (i.e., when do we expect the first dividend on our investment)? If Jane the senior engineer wins the lottery and leaves the company mid-project, could our investment get wiped out? How likely is that, and how much of a risk premium should I assess based on that likelihood?
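One hedged way to turn these questions into a number is a probability-weighted payoff over a handful of scenarios. The sketch below is a plain expected-value model, not a full risk analysis; the scenario names, probabilities, and payoffs are hypothetical, and `risk_premium` here simply means the gap between the plan-of-record payoff and the risk-weighted one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    probability: float  # probabilities across all scenarios must sum to 1
    net_value: float    # payoff in staff-hours (or dollars) if this scenario occurs


def expected_value(scenarios: list[Scenario]) -> float:
    """Probability-weighted payoff across all scenarios."""
    total = sum(s.probability for s in scenarios)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"probabilities sum to {total}, not 1")
    return sum(s.probability * s.net_value for s in scenarios)


def risk_premium(scenarios: list[Scenario], plan_of_record: str) -> float:
    """How much the plan-of-record payoff overstates the risk-weighted payoff."""
    plan = next(s for s in scenarios if s.name == plan_of_record)
    return plan.net_value - expected_value(scenarios)


# Hypothetical numbers for a single automation project.
scenarios = [
    Scenario("on_time", 0.70, 2000),            # delivered as planned
    Scenario("late", 0.20, 1500),               # first dividend arrives months later
    Scenario("key_person_leaves", 0.10, -400),  # Jane wins the lottery; the work is sunk
]

print(f"expected value: {expected_value(scenarios):.0f}")
print(f"risk premium vs. plan: {risk_premium(scenarios, 'on_time'):.0f}")
```

A more careful estimate would also discount each payoff for when it arrives; this sketch folds timing into each scenario's payoff.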

Often, none of this gets considered: the implementation may be so cheap that it doesn’t warrant this sort of analysis, the investment may be dismissed out of hand, it may be invisibly baked into other outlays, and so on.

Architectural Considerations

There are several categories of automation to consider from an architectural standpoint, and they fulfill different roles for the enterprise.

Problem Management: Automating problem management has several uses. Most straightforwardly, we can automate things like helpdesks and incident response. These automations tend to get built into the systems you already use for this, but setting them up can mean the difference between manually combing through helpdesk tickets and giving end users some modicum of self-service. Automatically populating our bug tracker when customers experience errors can be another version of this. And of course, anybody who has traversed a customer service call tree knows that these approaches can also be applied in an external-facing function.
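Here is a minimal sketch of the helpdesk case, assuming simple keyword rules rather than any particular ticketing product. Tickets matching a known self-service category get an automatic reply, tickets matching a team get routed, and only the remainder is escalated to a person. The rule table, queue names, and `kb/...` article paths are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class Ticket:
    id: int
    subject: str
    body: str


# Hypothetical rules: (pattern, queue, self-service article or None). First match wins.
RULES = [
    (re.compile(r"\b(password|locked out|mfa)\b", re.IGNORECASE), "identity", "kb/reset-password"),
    (re.compile(r"\b(vpn|wi-?fi|network)\b", re.IGNORECASE), "network", None),
    (re.compile(r"\b(invoice|expense|reimburse)", re.IGNORECASE), "finance-ops", None),
]


def triage(ticket: Ticket) -> dict:
    """Auto-reply, route, or escalate a ticket based on the first matching rule."""
    text = f"{ticket.subject}\n{ticket.body}"
    for pattern, queue, article in RULES:
        if pattern.search(text):
            action = "auto_reply" if article else "route"
            return {"id": ticket.id, "queue": queue, "action": action, "article": article}
    # Nothing matched: fall back to a human, which used to be the only path.
    return {"id": ticket.id, "queue": "manual-review", "action": "escalate", "article": None}


tickets = [
    Ticket(1, "Locked out", "I can't sign in since my MFA device changed."),
    Ticket(2, "VPN keeps dropping", "Happens every afternoon."),
    Ticket(3, "Odd request", "Can we get a scone printer in the lobby?"),
]
for result in map(triage, tickets):
    print(result)
```

Even rules this crude mean the senior architect only sees the tickets nobody anticipated.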

Robotic Process Automation: “RPA” has come into its own recently as an enterprise software category, but it has arguably been around for a very long time; AutoHotkey was first released almost 20 years ago. RPA is often used to free nontechnical staff from drudgery, but it can also be “scaled” by developing automations that run without supervision.

Pipelines: Marketing something as a “pipeline” tool makes it more understandable for everybody, but the distinguishing characteristic is that pipelines are infrastructure. Whether they shepherd data from place to place, provide “workflow” automation that integrates many other applications, or run CI/CD to help us make software, they are fundamentally fixtures of a platform. This distinguishes them from, for example, problem management tools that help humans solve one-off tasks.

Data pipelines are pretty straightforward in this regard: they encode a script or series of steps that would otherwise be performed by a data engineer and embed it in our infrastructure. We can instrument this infrastructure with data validation, metadata tagging, and other tooling, so the investment can fan out.
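To illustrate steps embedded in infrastructure, here is a tiny in-process runner. Each step is a function, every step's output passes through the same validators, and each record picks up a lineage tag. The step and check functions, the `_lineage` field, and the order data are hypothetical; a production pipeline would more likely use an orchestrator and a dedicated validation library.

```python
from datetime import datetime, timezone
from typing import Callable

Record = dict
Step = Callable[[list[Record]], list[Record]]
Check = Callable[[Record], bool]


def run_pipeline(records: list[Record], steps: list[Step], checks: list[Check]) -> list[Record]:
    """Run steps in order; validate each step's output and tag records with lineage."""
    for step in steps:
        records = step(records)
        for check in checks:
            failures = [r for r in records if not check(r)]
            if failures:
                raise ValueError(f"{step.__name__}: {len(failures)} record(s) failed {check.__name__}")
        stamp = datetime.now(timezone.utc).isoformat()
        # Metadata tagging: build new dicts so the caller's input records are not mutated.
        records = [
            {**r, "_lineage": r.get("_lineage", []) + [{"step": step.__name__, "at": stamp}]}
            for r in records
        ]
    return records


# Hypothetical steps and checks for a scone-order feed.
def drop_empty_orders(records: list[Record]) -> list[Record]:
    return [r for r in records if r.get("qty", 0) > 0]


def add_revenue(records: list[Record]) -> list[Record]:
    return [{**r, "revenue": r["qty"] * r["unit_price"]} for r in records]


def has_sku(r: Record) -> bool:
    return bool(r.get("sku"))


def price_non_negative(r: Record) -> bool:
    return r.get("unit_price", 0) >= 0


orders = [
    {"sku": "SCN-1", "qty": 3, "unit_price": 2.5},
    {"sku": "SCN-2", "qty": 0, "unit_price": 3.0},
]
clean = run_pipeline(orders, [drop_empty_orders, add_revenue], [has_sku, price_non_negative])
print(clean)
```

Because the checks and tags live in the runner rather than in each step, every new step inherits them, which is one way the investment fans out.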

CI/CD is an interesting example to consider: in our CI system, automation debt tends to be a small part of the overall problem. Consider low test coverage, an imbalanced mix of integration, unit, functional, and fuzz testing, or a lack of testing altogether; none of these are really “automation debt”, although we can call them technical debt. If the tests aren’t running automatically in the CI system at all, we probably have other things to worry about. Continuous delivery, on the other hand, can carry several automation debts at once, covering things like secrets management, release automation, unnecessary manual gates, and many more.

Workflow automation tools like Zapier or Node-RED overlap significantly with data and CI/CD pipelines, but they focus more deeply on integration. If a staff member needs to take some data from one system and update four SaaS vendors with it, we can give them a workflow tool. (In fact, you can build a workflow tool on top of a CI/CD tool.)
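At its core, a “take this data and update four vendors” workflow is a fan-out with per-target error handling. This is a minimal sketch, assuming each vendor is wrapped in a plain Python callable; the stand-in clients below are hypothetical placeholders for real SaaS API calls, and the retry policy (immediate retries, no backoff) is deliberately naive.

```python
from typing import Callable

Sender = Callable[[dict], None]


def fan_out(record: dict, targets: dict[str, Sender], max_attempts: int = 3) -> dict[str, str]:
    """Send one record to every target; one target's failure doesn't block the rest."""
    status: dict[str, str] = {}
    for name, send in targets.items():
        for attempt in range(1, max_attempts + 1):
            try:
                send(record)
                status[name] = "ok"
                break
            except Exception as exc:  # a real integration would catch narrower errors
                status[name] = f"failed after {attempt} attempt(s): {exc}"
    return status


# Hypothetical stand-ins for four SaaS vendor clients.
sent: list[tuple[str, int]] = []


def recorder(name: str) -> Sender:
    """Stand-in client that always succeeds and records what it received."""
    return lambda rec: sent.append((name, rec["customer_id"]))


flaky_calls = {"count": 0}


def flaky_support_desk(rec: dict) -> None:
    """Stand-in client that times out once, then succeeds."""
    flaky_calls["count"] += 1
    if flaky_calls["count"] == 1:
        raise ConnectionError("timeout")
    sent.append(("support", rec["customer_id"]))


def broken_marketing_tool(rec: dict) -> None:
    """Stand-in client that always fails."""
    raise RuntimeError("HTTP 500")


status = fan_out(
    {"customer_id": 42},
    {"crm": recorder("crm"), "billing": recorder("billing"),
     "support": flaky_support_desk, "marketing": broken_marketing_tool},
)
print(status)
```

The status report is what lets a staff member follow up on the one vendor that failed instead of redoing all four by hand.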

Platform: Platform automation work is either very hard or very easy. When you buy serverless, the platform automates away a tremendous amount of work on your behalf. On the consumer side of that relationship, this is magical; on the provider side, building it is deeply difficult. Many activities can be thought of as platform automation work, such as replacing a manual process with a web form or an API.

X-as-X: In their book “Cloud Native Infrastructure”, Kris Nova and Justin Garrison delineate a progression from Infrastructure as Diagram, through “as Script” and “as Code”, and finally to “as Software”. Managing our infrastructure manually (through “ClickOps”) is sometimes appropriate. Each stage in this progression is a difference in kind, not just degree. For example, to treat infrastructure as software, the organization may have to let go of manual review entirely, because the control loop in Kubernetes runs continuously.
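The control-loop idea behind infrastructure as software can be illustrated with a toy reconcile loop: observe the actual state, diff it against the desired state, act, and repeat until nothing changes. This sketches the general pattern under simplifying assumptions (replica counts in a dictionary stand in for real resources, and the hypothetical `apply_actions` stands in for infrastructure API calls); it is not how Kubernetes controllers are actually implemented.

```python
Actions = list[tuple[str, str, int]]


def diff(desired: dict[str, int], actual: dict[str, int]) -> Actions:
    """Compare desired vs. observed replica counts and list the actions needed."""
    actions: Actions = []
    for name, want in desired.items():
        have = actual.get(name, 0)
        if have < want:
            actions.append(("scale_up", name, want - have))
        elif have > want:
            actions.append(("scale_down", name, have - want))
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name, actual[name]))
    return actions


def apply_actions(actions: Actions, actual: dict[str, int]) -> None:
    """Stand-in for calls to a real infrastructure API."""
    for verb, name, n in actions:
        if verb == "scale_up":
            actual[name] = actual.get(name, 0) + n
        elif verb == "scale_down":
            actual[name] -= n
        elif verb == "delete":
            del actual[name]


def reconcile(desired: dict[str, int], actual: dict[str, int], max_passes: int = 10) -> int:
    """Observe, diff, act, repeat; returns how many passes made changes."""
    for passes in range(max_passes):
        actions = diff(desired, actual)
        if not actions:
            return passes
        apply_actions(actions, actual)
    raise RuntimeError("did not converge")


desired = {"web": 3, "worker": 2}
actual = {"web": 1, "legacy-cron": 1}
first = reconcile(desired, actual)
actual["web"] = 5  # someone resizes by hand ("ClickOps" drift)
second = reconcile(desired, actual)
print(first, second, actual)
```

The manual resize is simply reverted on the next pass. Since no human approves individual actions, review has to move upstream to changes in the desired state itself.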

Machine Learning: Despite the hype, machine learning in 2022 is a candidate for automating only a very specific subset of processes. Key features of a problem suited to machine learning include tolerance for error (ML systems are probabilistic), lots of good data, and a sufficiently complex decision function, among many others. The associate in the GC’s office at Scones Unlimited could probably use topic modeling to sort the roughly 100 inquiries he receives each month into categories. It’s probably OK if the model routes a few documents incorrectly, since counsel will immediately realize a document was misfiled when they review it.
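A hedged sketch of the routing idea follows. It swaps in a supervised technique, multinomial naive Bayes with add-one smoothing, in place of topic modeling (which is unsupervised), since routing to known categories needs labels anyway. The training examples, categories, and the 0.8 confidence threshold are all hypothetical. The threshold is how the sketch expresses tolerance for error: low-confidence inquiries go back to a person instead of being guessed.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesRouter:
    """Multinomial naive Bayes with add-one smoothing and a confidence threshold."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.word_counts = defaultdict(Counter)  # label -> token counts
        self.doc_counts = Counter()              # label -> number of training documents
        self.vocab = set()

    def fit(self, examples: list[tuple[str, str]]) -> "NaiveBayesRouter":
        for text, label in examples:
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.doc_counts[label] += 1
            self.vocab.update(tokens)
        return self

    def posteriors(self, text: str) -> dict[str, float]:
        tokens = tokenize(text)
        total_docs = sum(self.doc_counts.values())
        v = len(self.vocab)
        log_scores = {}
        for label, counts in self.word_counts.items():
            n = sum(counts.values())
            score = math.log(self.doc_counts[label] / total_docs)  # log prior
            for t in tokens:
                score += math.log((counts[t] + 1) / (n + v))  # smoothed log likelihood
            log_scores[label] = score
        # Convert log scores to probabilities, subtracting the max for numerical stability.
        top = max(log_scores.values())
        weights = {k: math.exp(s - top) for k, s in log_scores.items()}
        z = sum(weights.values())
        return {k: w / z for k, w in weights.items()}

    def route(self, text: str) -> tuple[str, float]:
        probs = self.posteriors(text)
        label, p = max(probs.items(), key=lambda kv: kv[1])
        # Below the threshold, don't guess: hand the inquiry back to a person.
        return (label, p) if p >= self.threshold else ("needs_human_review", p)


# Hypothetical labeled inquiries from the GC's office.
training = [
    ("vendor contract renewal terms", "contracts"),
    ("please review this supplier contract", "contracts"),
    ("trademark for our new scone brand", "ip"),
    ("someone copied our scone logo trademark", "ip"),
    ("employee overtime question", "employment"),
    ("hiring contractor employee classification", "employment"),
]
router = NaiveBayesRouter().fit(training)
print(router.route("supplier contract renewal"))
print(router.route("quick question about scones"))
```

With six training examples this is only a toy, but the shape carries over: the model handles confident cases, and a misrouted or ambiguous inquiry costs a reviewer a moment rather than a missed deadline.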

Business Challenges

Finally, we are left with our business challenges. A manual process may be worth automating, but the business may be accustomed to it. The staff may have the capabilities to carry out the manual process, but not to automate it. Management may not be able to invest in the automation itself. The challenges we can face are numerous, but let’s focus on a few core problems.

Change management: Rethinking change management has deep implications for the business, since it sits at the intersection of compliance and tech. Moving from infrastructure-as-code to infrastructure-as-software has change management implications; so does replatforming from an infrastructure-as-a-service provider to a platform-as-a-service provider. The business can be ready and willing to invest, and the staff can be capable and skilled with the new technology, but if the change management regime can’t adapt, we can’t go forward.

Changing service functions: Some of the qualitative changes that these automation maneuvers bring will challenge our assumptions about what various corporate services do. Does marketing hire data scientists? Does IT write software? Does Dev do Ops? Can Legal learn how to use GitHub? Plenty of the time these changes are not worth the effort, but even when they are, it’s very hard to commit to this sort of change and see it through.

Risk and failure: In our consideration of the economics, we can assess a risk premium to represent the probability and cost of failure, overruns, and other problems. Unfortunately, this doesn’t capture how failure and risk really play out in our business. Can the business cope with a significant risk of failure when it contemplates the investment? Is there a culture of experimentation, and, more pointedly, will careers on the team suffer if the automation project fails? The project could even succeed in automating the manual process, only to find that staff continue with the manual solution and ignore the new automation tool.

Scaling Away

Scones Unlimited, our intrepid automation-starved enterprise, wants to become a scaled, global powerhouse in scone logistics, and that should weigh heavily in how it evaluates its automation debts. Organizations that aren’t on a mission to become the next FAANG need less automation overall, and they can think about their automation debts differently than an aspiring hyperscale organization would. Still, every organization should understand its lack of automation as technical debt, because it’s the right mental model. Even under the pessimistic view that “we never pay down technical debt, it’s always secondary to feature work”, this puts automation in the right bucket and lets us view the portfolio more clearly.